Why Two Identical Tanks Don't Behave the Same | Lab Wizard

Why Two “Identical” Tanks Don’t Behave the Same

Question this article answers: If two lines use the same chemistry, the same rectifier program, and the same procedures, why can one tank hit spec while another produces dull deposits, thickness drift, or extra rework?

Short answer: A plating tank is not defined by the recipe alone. It is defined by everything that touches the bath and the load: heat and circulation, anode and rack condition, tank dimensions and accessories, and how people load and run the line. Those factors stack. Matching the paper parameters does not recreate the same effective process in two different boxes.

📋 When the bath matches but the parts do not

A plating shop runs five barrel nickel lines built to the same drawing. Same rectifier model, same chemistry supplier, same operating procedures. The operator pulls samples from Tank 2 and Tank 5 on the same shift.

The chemistry readings match within tolerance. Both tanks show stable temperature and pH. Yet parts from Tank 2 consistently meet brightness targets, while parts from Tank 5 need a second dip or fail cosmetic inspection.

The chemistry is the same. The settings are the same. The tanks were installed the same way. Still, the outputs differ.

This is not automatically a bad analysis or a hidden equipment failure. It is what happens when many small physical differences add up to different effective processing conditions at the part, even when bulk bath parameters agree.

⚙️ Where identical becomes different

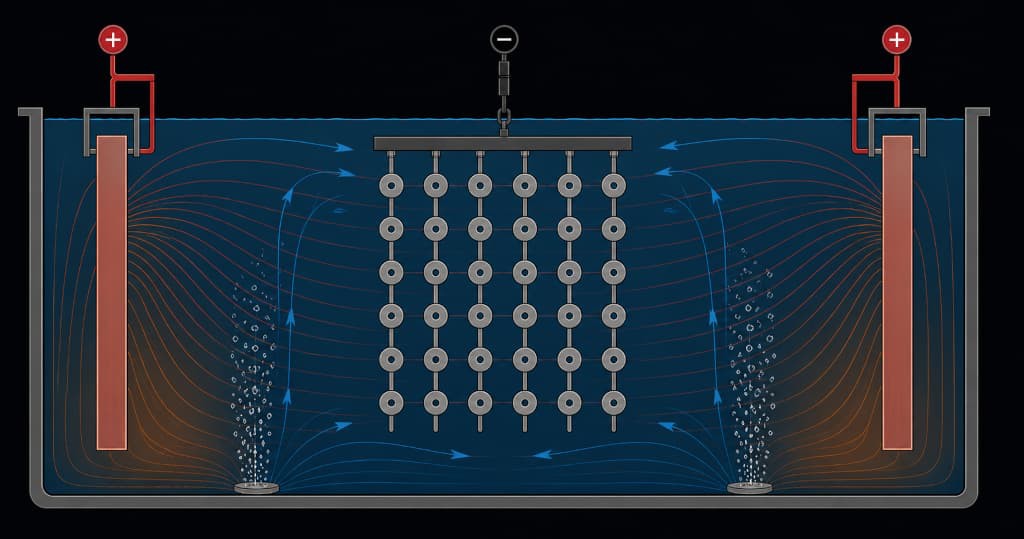

Every tank is a three-dimensional reactor: solution volume, heat input, mixing, and the mechanical path from rectifier to rack or barrel. The electrochemical story is the same in principle everywhere. The inputs that actually reach the work are rarely identical from tank to tank.

Temperature is never perfectly uniform. Bottom heaters, room drafts, and bath level change how warm the solution is from surface to floor and from wall to center. That affects reaction rates, additive behavior, and how gas escapes from the deposit. A single thermometer location does not describe the whole volume.

Anodes and electrical hardware age and sit differently. Count and type may match on paper; spacing, alignment, coating condition, and bus connection health often do not. Two tanks can show the same amp readout while delivery to the load differs because of maintenance state and geometry, not because operators failed to set the rectifier.

Agitation sets who gets fresh solution. Air, circulation, and barrel rotation move depleted solution away from surfaces and bring makeup chemistry and heat into play. Internal dimensions, baffles, diffuser placement, and load shape steer flow. Identical equipment labels on two air rings do not guarantee identical mixing in two different tank shells.

Loading decides what actually runs in the bath. Fill level, orientation, contact quality, and rotation speed change exposure time and which surfaces see fresh solution. The same part number can be run with different effective handling between tanks or between shifts.

These effects combine. A slightly colder corner, a weaker mixing zone, and a heavier load do not average out in a simple way. The result is that each tank settles into its own normal over time: not random noise, but a fingerprint of layout, maintenance, and practice.

Variation between nominally identical tanks is not a moral failure of the crew. It is an expected outcome of running the same recipe in different physical systems.

Key takeaway: Treat each tank as its own asset with its own baseline. Shop-wide averages and a single “standard” program often hide offsets that are stable and predictable once you split the data by tank.

Implementation tip: Plot trend data per tank before you blend tanks into one chart. Offsets between tanks are often invisible in a combined average.
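As a minimal sketch of why splitting by tank matters, the snippet below compares per-tank means with a single blended average. The readings are hypothetical current-efficiency values invented for illustration, and the names `readings`, `per_tank_means`, and `blended_mean` are assumptions, not part of any particular monitoring tool.

```python
from statistics import mean

# Hypothetical weekly current-efficiency readings (%), keyed by tank.
readings = {
    "Tank 2": [93.0, 93.3, 92.9, 93.2],
    "Tank 5": [89.4, 89.7, 89.3, 89.6],
}


def per_tank_means(data):
    """Return the mean reading for each tank separately."""
    return {tank: mean(values) for tank, values in data.items()}


def blended_mean(data):
    """Return one average over all tanks, as a combined chart would show."""
    return mean(v for values in data.values() for v in values)


tank_means = per_tank_means(readings)
overall = blended_mean(readings)

# Offset of each tank from the blended average; these are hidden
# whenever only the combined number is reported.
offsets = {tank: m - overall for tank, m in tank_means.items()}

for tank, m in tank_means.items():
    print(f"{tank}: mean {m:.1f} %, offset {offsets[tank]:+.1f} % vs blended {overall:.1f} %")
```

With these made-up numbers the blended average sits between the two tanks and describes neither of them, which is the effect the tip warns about.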

🔍 How to recognize differences in operation

Stable differences between tanks show up as different baselines, not only scatter.

They rarely arrive as a single alarm. They show up as patterns people notice but struggle to document.

Within one tank, first parts off the line can differ from last parts. If the start of a barrel run looks different from the end, the tank has an internal gradient: position in the load, stratification, or depletion along the cycle. That is the tank’s behavior during the run, not a one-off mistake at the load station.

The same part and program in two tanks produce two outcomes. Tank 2 holds brightness; Tank 5 runs dull. The operator nudges current or time on Tank 5 and gets a short-term fix while the underlying offset remains.

A correction that helps one tank can hurt another. Additions or parameter moves tuned for one tank’s baseline can push a second tank out of its window because the baselines were never the same.

Process history splits by tank. When you track efficiency, voltage stability, or effective deposition over time, each tank establishes its own level. That pattern is different from ordinary measurement noise; for how to separate stable offsets from noise, see signal vs noise in process data. The baselines are not identical even when the tanks were bought as the same model.

The table below is illustrative. It shows how five nominally identical tanks can settle at different operating fingerprints under similar nominal settings.

Typical baseline variation between nominally identical tanks

Illustrative values: same recipe and design can still yield different stable fingerprints per tank

| Metric | Tank 1 | Tank 2 | Tank 3 | Tank 4 | Tank 5 |
|---|---|---|---|---|---|
| Deposition rate (µm/hr) | 12.4 | 12.1 | 11.8 | 12.3 | 11.5 |
| Average voltage (V) | 6.2 | 6.4 | 6.1 | 6.3 | 6.7 |
| Current efficiency (%) | 94.2 | 93.1 | 91.8 | 93.8 | 89.5 |
| Temp gradient (°C, top to bottom) | 0.8 | 1.2 | 1.5 | 0.9 | 2.1 |
| Anode spacing spread (mm, typical) | ±3 | ±5 | ±7 | ±4 | ±9 |

Note: Your numbers will differ. The lesson is the pattern of separation between tanks, not the exact cells.

Trend charts expose the pattern. When you plot one metric with a separate series per tank over several weeks, you usually see bands that do not overlap. The spread between tanks is often larger than the variation inside a single tank. That shape means asset-level factors are dominating, not a single mysterious “bad day.”
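One way to put a number on that shape is to compare the spread between tank averages with the typical spread inside each tank. The sketch below does this with the standard library only. The weekly deposition-rate values are hypothetical, and `spread_summary` and `bands_overlap` are illustrative helper names, not an established method from this article.

```python
from statistics import mean, pstdev

# Hypothetical weekly deposition rates (µm/hr), one series per tank.
weekly = {
    "Tank 1": [12.5, 12.3, 12.4, 12.4],
    "Tank 3": [11.9, 11.7, 11.8, 11.8],
    "Tank 5": [11.4, 11.6, 11.5, 11.5],
}


def spread_summary(data):
    """Return (within, between): the average standard deviation inside
    each tank, and the standard deviation of the tank means."""
    tank_means = [mean(values) for values in data.values()]
    within = mean(pstdev(values) for values in data.values())
    between = pstdev(tank_means)
    return within, between


def bands_overlap(data):
    """Return True if any two tanks' min-to-max ranges overlap."""
    ranges = sorted((min(v), max(v)) for v in data.values())
    highest = ranges[0][1]
    for low, high in ranges[1:]:
        if low <= highest:
            return True
        highest = max(highest, high)
    return False


within, between = spread_summary(weekly)
print(f"within-tank spread:  {within:.3f} µm/hr")
print(f"between-tank spread: {between:.3f} µm/hr")
print("bands overlap:", bands_overlap(weekly))
```

When the between-tank figure is several times the within-tank figure and the bands do not overlap, the data is pointing at asset-level offsets rather than noise. A formal analysis of variance would be more rigorous; this is only a quick screen.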

Bath temperature and chemistry interact with that physical picture; for how pH, conductivity, and temperature behave as a system in real tanks, see pH, conductivity & temperature control in plating and wet process baths.

📉 What this costs the operation

Rework and scrap follow the weak lines. When one tank routinely runs marginal, that tank drives touch-up, replate, or scrap. Cost multiplies across chemistry, energy, labor, and cycle time.

Improvements do not copy blindly between tanks. A time or current change validated on one tank may underplate or burn on another when physical baselines differ. The shop cannot assume one optimized card works everywhere without evidence per tank.

Training becomes tribal. Experienced operators carry undocumented offsets (“Tank 5 needs a little more time”). New hires relearn the same losses until someone writes down per-tank expectations.

Audits get heavier. When outcomes differ by tank, you must show each asset is understood and controlled on its own evidence trail. That is more history, more charts, and clearer assignment of limits to the right tank.

Capacity follows the slow lane. If one tank needs materially longer time to meet the same cosmetic or thickness outcome, effective throughput is capped there. Faster tanks do not cancel that when quality rules the release decision.
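The throughput penalty can be roughed out with simple arithmetic. The sketch below uses the illustrative deposition rates from the table earlier in this article and assumes a hypothetical 10 µm target and a constant deposition rate over the cycle, which real baths only approximate. It also shows what happens if the fastest tank's cycle time is copied to the slowest tank.

```python
# Illustrative deposition rates (µm/hr) from the table earlier in this article.
RATES_UM_PER_HR = {
    "Tank 1": 12.4,
    "Tank 2": 12.1,
    "Tank 3": 11.8,
    "Tank 4": 12.3,
    "Tank 5": 11.5,
}

TARGET_UM = 10.0  # hypothetical thickness target, for illustration only


def minutes_to_target(rate_um_per_hr, target_um=TARGET_UM):
    """Cycle time in minutes to reach the target at a constant rate."""
    return target_um / rate_um_per_hr * 60.0


def thickness_after(rate_um_per_hr, minutes):
    """Thickness in µm deposited in the given time at a constant rate."""
    return rate_um_per_hr * minutes / 60.0


times = {tank: minutes_to_target(rate) for tank, rate in RATES_UM_PER_HR.items()}
slowest = max(times, key=times.get)
fastest = min(times, key=times.get)

# Shortfall if the slowest tank is run on the fastest tank's cycle time.
shortfall = TARGET_UM - thickness_after(RATES_UM_PER_HR[slowest], times[fastest])

for tank, minutes in times.items():
    print(f"{tank}: {minutes:.1f} min to reach {TARGET_UM:.0f} µm")
print(f"{slowest} on {fastest}'s cycle time would be {shortfall:.2f} µm under target")
```

Under these assumptions the slowest tank needs a few extra minutes per cycle, and copying the fastest tank's time to it leaves the deposit short of target.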

❌ What operators and managers get wrong

❌ Using chemistry alone to “sync” tanks. If both baths are in range on analysis, chasing one tank’s appearance by additions often moves chemistry away from where either tank runs best. When the indicators point to physical drivers, fix those first.

❌ Extending time instead of addressing the offset. Longer cycles buy thickness or cosmetics at higher energy and lower schedule margin. They do not fix a cold zone, a dead mixing corner, or a contact issue.

❌ Calling every difference random. Stable offsets between tanks are systematic. They deserve different responses than noise inside one tank.

❌ Assuming newer means aligned. New equipment still has its own installation, vendor tolerances, and maintenance path. Its offsets may simply be different ones, not automatically smaller ones.

❌ Monitoring all tanks against one generic limit. When tanks have different normals, one set of thresholds creates false comfort on one line and false alarms on another. Each tank needs its own baseline and alert logic; for how to turn that into action rules, see when monitoring should turn into action.

🧰 How to manage variation between tanks

Lead with what is true for each asset, not only what the master recipe says.

Step 1: Establish baselines per tank. Run a standard test load or coupon protocol in each tank under controlled conditions. Capture chemistry, electrical settings, thickness or weight gain, and visual criteria. Repeat until each tank has a documented normal.

Step 2: Record the physical profile. Note anode type, count, spacing, and alignment; heating layout; agitation hardware and placement; tank dimensions and baffling; and major maintenance events. These are the levers behind baseline offsets.

Step 3: Approve parameters per tank where needed. If two tanks need different time or current to hit the same functional outcome, document that as controlled variance tied to data, not verbal habit.

Step 4: Monitor each tank against its own history. Alerts should fire when a tank drifts from its baseline, not when it differs from another tank’s number without context.

Step 5: Tie monitoring to chemistry and operations. Monitoring data explains why a tank moved; bath management keeps the solution in range; loading and contact discipline close the loop.
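Steps 1 and 4 above can be sketched in a few lines: build a baseline from each tank's own history, then judge new readings against that tank's limits only. The voltage histories below are hypothetical, and the mean plus or minus three sample standard deviations is a deliberate simplification; a formal individuals control chart would typically derive its limits from moving ranges. `build_baseline` and `check_reading` are illustrative names.

```python
from statistics import mean, stdev

# Hypothetical average-voltage history (V) per tank under a standard test load.
history = {
    "Tank 1": [6.2, 6.1, 6.3, 6.2, 6.2, 6.1, 6.3],
    "Tank 5": [6.7, 6.6, 6.8, 6.7, 6.7, 6.6, 6.8],
}


def build_baseline(values, k=3.0):
    """Return center and limits as mean +/- k sample standard deviations.
    This is a simplified stand-in for formal control limits."""
    center = mean(values)
    sigma = stdev(values)
    return {"center": center, "low": center - k * sigma, "high": center + k * sigma}


def check_reading(reading, baseline):
    """Return 'ok' if the reading is inside the tank's own limits, else 'drift'."""
    if baseline["low"] <= reading <= baseline["high"]:
        return "ok"
    return "drift"


baselines = {tank: build_baseline(values) for tank, values in history.items()}

# The same 6.7 V reading is judged against each tank's own normal.
for tank, baseline in baselines.items():
    status = check_reading(6.7, baseline)
    print(f"{tank}: limits {baseline['low']:.2f} to {baseline['high']:.2f} V, 6.7 V is {status}")
```

With this made-up history, 6.7 V is normal for Tank 5 and a drift signal for Tank 1, which is why a single shared threshold would mislead on at least one of them.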

Implementation tip: Plot one metric such as efficiency or effective deposition weekly with a separate line per tank. Within a few weeks, offsets usually become obvious enough to document. Platforms that keep per-tank series and tasks in one place, such as Lab Wizard Cloud, reduce copying errors and missed drift when you run many lines in parallel.

Related Resources

- When monitoring should turn into action

- Signal vs noise in process data

- Stable systems don’t require heroics

- pH, conductivity & temperature control in plating and wet process baths

- Leading vs. lagging indicators in plating quality

External links

- NIST: Interpreting control charts: Framework for detecting process variation patterns in control chart data

- ASQ: Variation (common vs special cause): Random versus systematic variation in quality terms

- AIAG: Statistical process control: SPC practice in manufacturing environments