Why Sampling Frequency Matters More Than You Think | Lab Wizard

📋 When the charts look fine but the parts do not

The barrel plating run finished on time. The operator recorded voltage, current, and temperature at the standard intervals. Every reading fell within control limits. The control charts looked stable. The parts came off the line, and the quality lab measured plating thickness variation that exceeded the customer specification by 30 percent.

The data never showed anything wrong. The parts told a different story.

Sparse snapshots can make a drifting process look steady on paper.

This is not a failure of data collection. The operator followed procedure. The instruments were calibrated. The control limits were appropriate. The problem was something subtler: the sampling schedule created an illusion of stability that hid a genuine process deviation. The data was correct at every point where it existed, and that correctness was exactly what made it misleading.

When a batch fails under these conditions, the investigation usually turns to chemistry, equipment, or operator technique. It rarely turns to the timing of the data. Yet the gap between data points is often where the real story lives.

Core point: Your sampling interval decides what exists in the record, and what never gets written down.

⚙️ Why the gap between samples is where deviations hide

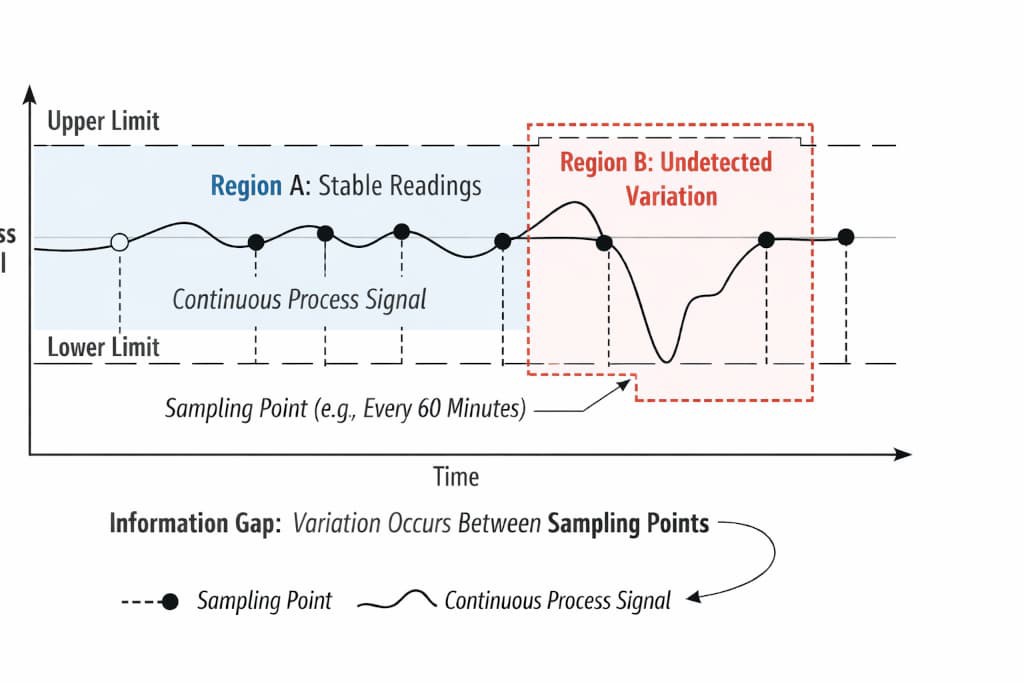

A continuous plating process does not produce continuous data unless you instrument it to do so. What you collect is a series of discrete snapshots taken at fixed intervals. Between each snapshot, the process is running, changing, and potentially deviating, but no record of that time exists in your data.

This creates a fundamental asymmetry. You are evaluating the stability of a continuous system using discrete observations, and the intervals between those observations determine what you can and cannot see.

Think of it as a strobe light effect. When you illuminate a moving object at regular intervals, you see its position at each flash. If the object moves quickly between flashes and returns to a similar position, the sequence of positions can look like steady motion. But between those positions, the object may have traveled a much larger distance, encountered an obstacle, or changed direction entirely. Your data would show nothing because you did not capture what happened between the flashes.

The plating process operates the same way. A rectifier current spike that lasts five minutes, a temperature deviation during a bath refill, a sudden change in part loading that shifts current distribution, a chemical depletion that builds between replenishment cycles: any of these can happen during the gap between samples and leave no trace in your records.

Between flashes, the process runs without a record; whatever happens there is invisible to your charts.

Key Takeaway: Stable data does not prove a stable process. It proves that the process was stable at the moments you looked.

The relationship between sampling interval and detection probability is not abstract. It is a measurable function. The longer your sampling interval relative to the duration of process deviations, the lower the probability that any given deviation will be captured. This is true regardless of how accurate your instruments are, how well your control limits are set, or how carefully your operators follow procedure.

When a deviation is shorter than your sampling interval, it is detected only if it happens to overlap a sampling moment, and the odds improve as its duration grows relative to the interval. Once a deviation lasts at least as long as the interval, a strictly fixed schedule will place at least one reading inside it. In the range where deviation duration falls below your sampling interval, detection is probabilistic rather than certain.
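The relationship can be sketched numerically. This is a minimal sketch under two simplifying assumptions that are not from any specific line: readings are instantaneous and taken at a strictly fixed interval, and a deviation starts at a random time relative to the sampling clock. Under those assumptions, the chance that at least one reading lands inside the deviation is the deviation duration divided by the interval, capped at 1. The function name `detection_probability` is illustrative.

```python
def detection_probability(deviation_min: float, interval_min: float) -> float:
    """Chance that at least one fixed-interval reading falls inside a deviation.

    Assumes instantaneous readings and a deviation start time that is
    uniformly random relative to the sampling clock.
    """
    if deviation_min < 0 or interval_min <= 0:
        raise ValueError("deviation must be >= 0 and interval must be > 0")
    return min(1.0, deviation_min / interval_min)


if __name__ == "__main__":
    # Deviation durations compared against a 60-minute sampling interval.
    for deviation, interval in [(15, 60), (30, 60), (60, 60), (90, 60)]:
        p = detection_probability(deviation, interval)
        print(f"{deviation:>3} min deviation, {interval} min interval: {p:.2f}")
```

Real schedules drift and real sensors average over a window, so treat the result as a rough planning number rather than a guarantee.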

This probabilistic detection is the source of the illusion. Your process is generating deviations, but your sampling schedule is catching only a fraction of them. The ones that go undetected never appear on your charts. What remains is a dataset that looks stable, even though the underlying process is not.

What the data shows: Rectifier current at 245 A at 8:00 AM. Current at 248 A at 9:00 AM. Current at 246 A at 10:00 AM. All readings within control limits. Process appears stable.

What actually happened: Between 8:15 AM and 8:45 AM, the rectifier experienced a sustained current spike to 270 A due to a rack contact issue that cleared before the 9:00 AM reading. The parts plated during those thirty minutes received excess current that did not appear in any recorded data point.

The discrepancy between these two descriptions is the sampling gap in action. Your data is accurate. Your process was not stable. Both statements are true simultaneously, and the gap between them exists because of how frequently you sample.
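The example above can be replayed as a small simulation. The one-minute trace below is hypothetical: it is built only to match the three recorded readings and the thirty-minute spike described in the text, and the 260 A upper control limit is an assumed value chosen for illustration.

```python
UPPER_LIMIT_A = 260.0  # assumed upper control limit, not taken from the article


def rectifier_current(minute: int) -> float:
    """Hypothetical current in amps; minutes are counted from 8:00 AM."""
    if 15 <= minute < 45:   # 8:15 to 8:45, the rack contact issue
        return 270.0
    if minute < 15:
        return 245.0
    if minute < 90:
        return 248.0
    return 246.0


# What the process did, minute by minute, from 8:00 to 10:00.
trace = [rectifier_current(m) for m in range(121)]

# What the hourly schedule recorded: minutes 0, 60, and 120.
hourly = trace[::60]

minutes_over_limit = sum(1 for amps in trace if amps > UPPER_LIMIT_A)

print("hourly readings:", hourly)
print("highest hourly reading:", max(hourly))
print("highest actual current:", max(trace))
print("minutes above limit:", minutes_over_limit)
```

Every hourly reading sits below the assumed limit, while the full trace spends thirty minutes above it. Both views come from the same process.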

🔍 How the sampling gap shows up in real operations

The mechanism described above manifests in your operation through recognizable patterns. The key is learning to distinguish between genuinely stable processes and processes that merely appear stable because of your sampling schedule.

If the failure timing does not line up with the data, suspect the interval before you suspect the chemistry.

One of the most common indicators is the timing of failures. When a batch fails for a parameter that your monitoring system should have caught, but the data shows no warning, the sampling frequency is a first-order suspect. The data did not lie. It simply did not capture what happened.

Another pattern appears when you increase sampling frequency and suddenly see problems you did not know existed. This does not mean the process became unstable at the moment you sampled more frequently. It means the process was already unstable, and your previous sampling schedule was insufficient to reveal it.

You can also recognize this mechanism through the structure of your own data. Look at the standard deviation of your recorded values. If the standard deviation is very small compared to what you would expect from a process running continuously, your sampling interval may be filtering out the very variation you need to see. You are not seeing less variation because the process is more stable. You are seeing less variation because your sampling schedule is missing the unstable periods.
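One way to run this check, assuming you can log at high frequency for a short trial period, is to compare the spread of the routine readings against the spread of the dense trace. The sketch below uses a hypothetical bath temperature series (the 60 °C baseline, the dip size, and the timing are all made up for illustration), and `variation_ratio` is an illustrative helper name.

```python
from statistics import pstdev


def variation_ratio(sparse_readings, dense_readings):
    """Spread of the routine record divided by spread of the dense trace.

    A value near 1.0 means the routine schedule sees about the same
    variation as the dense trace; a value near 0 means it sees far less.
    """
    dense_sd = pstdev(dense_readings)
    if dense_sd == 0:
        raise ValueError("dense trace has no variation to compare against")
    return pstdev(sparse_readings) / dense_sd


# Hypothetical temperature in degrees C, one reading per minute for two hours,
# with a 20-minute, 3-degree dip starting at minute 70.
dense = [60.0 - (3.0 if 70 <= m < 90 else 0.0) for m in range(121)]

# The routine hourly record keeps only minutes 0, 60, and 120.
sparse = dense[::60]

ratio = variation_ratio(sparse, dense)
print(f"routine record shows {ratio:.0%} of the dense-trace variation")
```

A low ratio does not say what went wrong. It only indicates that the routine schedule is not seeing variation the process actually has.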

This is closely related to the signal vs noise dynamic. Your sampling schedule can suppress both the meaningful signal and the random noise, leaving behind a deceptively clean dataset.

The audit question is straightforward but often overlooked: what is the ratio between your sampling interval and the shortest duration of a meaningful process deviation? If your sampling interval is one hour and a bath refill takes twenty minutes and shifts temperature by three degrees, your sampling schedule will miss that event roughly two times out of three, assuming refills are not synchronized with the readings.

Detection Probability by Sampling Interval

How sampling interval and deviation duration interact to determine detection probability. As the gap between samples grows relative to event duration, the probability of detection drops sharply.

| Sampling Interval | Deviation Duration | Detection Probability |
|---|---|---|
| 30 minutes | 15 minutes | Moderate: roughly even odds of overlapping a sample |
| 1 hour | 15 minutes | Low: requires favorable timing |
| 1 hour | 30 minutes | Moderate: roughly even odds of overlapping a sample |
| 1 hour | 2 minutes | Very low: nearly impossible to catch |
| 2 hours | 15 minutes | Minimal: almost certainly missed |
| 4 hours | 15 minutes | Negligible: deviation invisible to data |

The table above is illustrative, not prescriptive. The point is structural: as the gap between your samples grows relative to the duration of the events you need to detect, the probability of detection drops sharply. This is a function of the relationship between interval and deviation duration, not a function of how many data points you collect in total.

Implementation Tip: When investigating unexplained quality failures, review your sampling schedule before reviewing your chemistry. The data may be accurate, but accurate data collected at the wrong frequency cannot tell you what happened between the samples.

📉 When stable-looking data hides scrap, rework, and blind spots

The cost of undetected deviations is rarely visible in the data that triggered the investigation. This is the central paradox of sampling gaps: the very mechanism that hides the problem also hides the cost of the problem.

You pay twice: once in scrap or rework, again when the root cause never shows up in the record.

When a batch fails due to a deviation that your sampling schedule missed, the investigation typically follows the path of available evidence. The data looks normal. Chemistry checks out. Equipment diagnostics show no faults. The investigation may turn to raw material variation, operator technique, or environmental factors. The sampling frequency is almost never on the short list, because the data provides no signal pointing to it.

This creates a compounding cost structure. The immediate cost is the failed batch, scrap, rework, or customer reject. But the hidden cost is the inability to prevent the same failure from recurring, because the root cause analysis did not identify the sampling gap as a contributing factor. This is the core of the late detection cost dynamic. The cost isn’t just the scrap; it’s the inability to learn from the failure because the mechanism that caused it remains invisible.

Over time, this pattern can erode process capability without any single event triggering a formal corrective action. The process is not getting worse. It is the same process it was before. But your ability to detect and address variation is degrading as the gap between your sampling schedule and the actual dynamics of the process widens.

The audit impact is another dimension. When an auditor reviews your process data and sees consistent readings within control limits, they have no reason to question process stability. The sampling frequency of your data collection is rarely examined as part of an audit trail. The data that exists is accurate, well-documented, and properly controlled. The data that does not exist, the gaps between your samples, is invisible to the audit review.

This is not a criticism of audit practices. It is a recognition that standard audit methodologies evaluate the data that is presented to them, not the data that was not collected. Your sampling frequency determines what the audit sees, and if that frequency is too coarse, the audit will see a stable process even when instability exists.

The financial impact compounds across multiple dimensions: scrap from undetected deviations, rework costs from parts that only partially meet specification, customer relationship damage from inconsistent quality that cannot be traced to a specific corrective action, and the opportunity cost of management attention directed toward investigating chemistry and equipment issues when the real constraint is the data collection schedule.

🚩 Mistakes that keep the sampling gap invisible

❌ Equating stable data with stable process. When your control charts show consistent readings, the natural assumption is that the process is running well. This assumption is only valid if your sampling frequency is sufficient to capture meaningful process deviations. Otherwise, you are mistaking data stability for process stability.

❌ Using fixed intervals for processes with variable dynamics. A one-hour sampling interval may be adequate during steady-state production but completely inadequate during batch changeovers, bath refills, or equipment startups. Processes with variable dynamics require sampling schedules that account for periods of higher variation, not just average conditions.

❌ Increasing sampling frequency without understanding the mechanism. Some teams respond to quality issues by collecting data more frequently. This can reveal hidden variation, but it also increases data volume without necessarily improving decision quality. Understanding the relationship between your sampling interval and your process deviations is more important than simply collecting more data points.

❌ Treating missing data as evidence of no event. When a scheduled data point is skipped or a sensor goes offline, the common interpretation is that nothing unusual happened during that period. Missing data is not evidence of stability. It is evidence of uncertainty. The difference matters when you are trying to determine whether a process deviation occurred but was not recorded.
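The last point can be made operational by flagging gaps explicitly instead of letting them pass silently. The following is a minimal sketch, assuming readings carry timestamps and the schedule has a known nominal interval. The helper name `find_gaps`, the five-minute tolerance, and the example date are all illustrative.

```python
from datetime import datetime, timedelta


def find_gaps(timestamps, expected_interval, tolerance=timedelta(minutes=5)):
    """Return (start, end) pairs where consecutive readings are further
    apart than the expected interval plus a tolerance.

    Each pair marks a window of uncertainty, not a window of stability.
    """
    ordered = sorted(timestamps)
    limit = expected_interval + tolerance
    return [(a, b) for a, b in zip(ordered, ordered[1:]) if b - a > limit]


# Hypothetical hourly log where the 10:00 reading was skipped.
readings = [datetime(2024, 1, 15, hour) for hour in (8, 9, 11, 12)]

gaps = find_gaps(readings, expected_interval=timedelta(hours=1))
for start, end in gaps:
    print(f"no data between {start:%H:%M} and {end:%H:%M}")
```

Reporting these windows alongside the control chart keeps "no reading" from being read as "no event".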

A one-size schedule can look responsible while it systematically skips the moments that matter.

Key Takeaway: The sampling frequency of your data collection is a process parameter in its own right. It deserves the same attention and periodic review as any other control variable in your operation.
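Treating the sampling interval as a parameter could look like a schedule keyed to process phase rather than one fixed number. The sketch below is only an illustration: the phase names follow the examples in this section, but the interval values are placeholders, and the right numbers depend on how long meaningful deviations last in your own process.

```python
# Assumed sampling intervals in minutes per process phase (placeholder values).
SAMPLING_INTERVAL_MIN = {
    "steady_state": 60,
    "batch_changeover": 5,
    "bath_refill": 2,
    "equipment_startup": 5,
}
DEFAULT_INTERVAL_MIN = 5  # unknown phase: err toward the denser schedule


def interval_for(phase: str) -> int:
    """Sampling interval in minutes for a named phase."""
    return SAMPLING_INTERVAL_MIN.get(phase, DEFAULT_INTERVAL_MIN)


def sample_times(phases):
    """Build sample times (minutes from run start) for a sequence of phases.

    phases: list of (phase_name, duration_min) tuples in run order.
    """
    times = []
    start = 0
    for phase, duration in phases:
        times.extend(range(start, start + duration, interval_for(phase)))
        start += duration
    return times


# Hypothetical run: two hours steady, a 20-minute refill, one more hour steady.
schedule = sample_times(
    [("steady_state", 120), ("bath_refill", 20), ("steady_state", 60)]
)
print(schedule)
```

In this hypothetical run, ten of the thirteen readings fall inside the 20-minute refill, which is where a fixed hourly schedule would have taken at most one.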

🔗 Related Resources

- When Monitoring Should Turn Into Action: Explores trigger points for intervention and the timing of decisions based on process data

- Signal vs Noise in Process Data: Understanding how to distinguish meaningful variation from random process noise

- Late Detection Cost: Scrap and Rework: The financial impact of delayed detection of process deviations

- Understanding SPC Parameters: Core definitions and interpretations of statistical process control parameters

External Links

- NIST: Interpreting Control Charts: Government-standard guidance on interpreting statistical control charts and control chart patterns

- ASQ: Variation (Common vs Special Cause): Foundational quality association resource distinguishing between common cause and special cause variation

- AIAG: Statistical Process Control: Industry-standard manual covering SPC principles and practical application in manufacturing environments