The Problem With Averages in Process Data | Lab Wizard


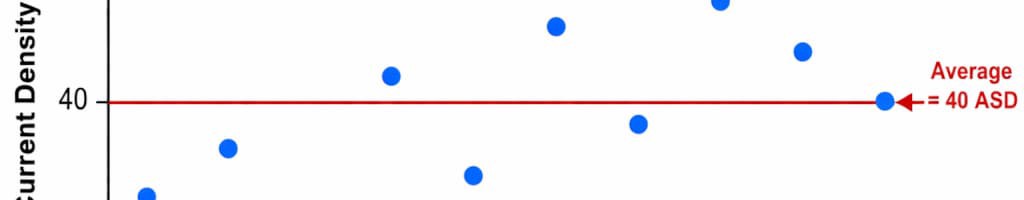

In surface finishing and electroplating, averages show up everywhere: mean bath analysis for the day, mean thickness for a lot, mean pH from rounds, mean cleaner concentration for the week. Those summaries are convenient, but they are not the same thing as knowing whether the process behaved.

When variation is folded into a single number, you lose the evidence operators and inspectors actually live with: spikes, drift, uneven rack-to-rack results, and the occasional bad run that still “averages out.”

🎯 How averages destroy signal detection

An average answers one question: where is the middle? It does not answer: how wide was the spread, did it change through the shift, and did any individual units see a bad window?

Imagine ten thickness readings on consecutive panels from the same metal finishing line (same nominal process, same target band). The numbers are in microns:

| Panel | Thickness (µm) |
|---|---|
| 1 | 8.1 |
| 2 | 8.9 |
| 3 | 8.0 |
| 4 | 9.6 |
| 5 | 8.3 |
| 6 | 7.7 |
| 7 | 8.8 |
| 8 | 7.9 |
| 9 | 9.2 |
| 10 | 7.5 |

Mean thickness: 8.4 µm, often “close enough” on paper.

What the line felt: a 2.1 µm spread from lowest to highest panel. That is not a subtle rounding error in plating; it is the kind of spread that shows up as brightness differences, edge buildup, or coupon failures, even when the lot average looks fine.
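
As a minimal sketch, the center and spread of the ten readings above can be recomputed with Python's standard library. The variable and function names are illustrative, not part of any Lab Wizard API.

```python
from statistics import mean, stdev

# Ten thickness readings (µm) from the table above.
thickness_um = [8.1, 8.9, 8.0, 9.6, 8.3, 7.7, 8.8, 7.9, 9.2, 7.5]


def summarize(readings):
    """Return the center and the spread of a list of readings."""
    return {
        "mean": round(mean(readings), 2),
        "min": min(readings),
        "max": max(readings),
        "range": round(max(readings) - min(readings), 2),
        "stdev": round(stdev(readings), 2),  # sample standard deviation
    }


summary = summarize(thickness_um)
print(summary)
```

Reporting the minimum, maximum, and range next to the mean costs one extra line in a report and keeps the 2.1 µm spread visible.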

If you only publish the mean, you miss:

- Individual panels near the edge of customer requirements

- A run that wanders high early and low late (the mean hides the swing)

- The difference between “centered noise” and “something changed mid-run”

📊 Valid vs invalid use of averages

| Context | Valid averaging | Invalid averaging |
|---|---|---|
| Time | Summaries for long horizons when raw samples are preserved | Rolling together startup, steady production, and cleanup into one shift mean |
| Space | Replicates at the same process condition (true duplicates) | Mixing high and low current areas, first vs last rack, or different tanks |
| Subgrouping | Rational subgroups aligned to batches, racks, or controlled sampling plans | Arbitrary “every 10th row” grouping that scrambles cause and effect |
| Reporting | Executive rollups with drill down to individuals | Replacing the operational view operators need with a single green number |
| Detection | Never the primary tool | Using means to decide whether the process “was OK” after a quality hit |

🔍 What averages hide in plating and metal finishing

Hidden drift

A bath can glide from one side of the working window to the other across a shift. The daily mean can look steady while every hour was different. In electroplating, that often means operators chase symptoms (additions, brightener tweaks) because the summary said “fine,” not because the trajectory was fine.
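
To illustrate the drift case, the sketch below compares two hypothetical sets of hourly bath pH readings that share the same mean. The data and the `slope_per_step` helper are invented for illustration; the slope is an ordinary least-squares fit against the sample index.

```python
from statistics import mean

# Hypothetical hourly pH readings for one shift (not real bath data).
steady_ph = [4.2, 4.3, 4.1, 4.2, 4.2, 4.1, 4.3, 4.2, 4.2]
drifting_ph = [4.6, 4.5, 4.4, 4.3, 4.2, 4.1, 4.0, 3.9, 3.8]


def slope_per_step(readings):
    """Least-squares slope of the readings against their sample index."""
    n = len(readings)
    x_bar = (n - 1) / 2
    y_bar = mean(readings)
    num = sum((i - x_bar) * (y - y_bar) for i, y in enumerate(readings))
    den = sum((i - x_bar) ** 2 for i in range(n))
    return num / den


for name, series in (("steady", steady_ph), ("drifting", drifting_ph)):
    print(f"{name}: mean={mean(series):.2f}, slope={slope_per_step(series):+.3f} pH/hour")
```

Both series report the same shift mean, but only the second one loses about 0.1 pH per hour. A trend line or a run chart shows that; the mean does not.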

Masked excursions

Short events (pump cavitating, filter changeover, heat exchanger hiccup, interrupted agitation) can affect a handful of loads. Averaging across the interval turns those minutes into a small dent in a big number. The people on the floor remember the event; the report often does not.
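
A rough sketch of how a short event gets diluted: forty hypothetical load readings, three of them taken during an upset. The acceptance band and the data are assumptions made up for the example.

```python
from statistics import mean

# Hypothetical acceptance band (µm); use your own specification limits.
LOW_UM, HIGH_UM = 7.5, 9.5

# Hypothetical interval: 40 loads, 3 of them plated during a short upset.
readings_um = [8.4] * 20 + [6.0] * 3 + [8.4] * 17


def find_excursions(readings, low, high):
    """Return (index, value) pairs for readings outside the band."""
    return [(i, r) for i, r in enumerate(readings) if not low <= r <= high]


interval_mean = mean(readings_um)
excursions = find_excursions(readings_um, LOW_UM, HIGH_UM)

print(f"interval mean: {interval_mean:.2f} µm")
print(f"loads outside band: {excursions}")
```

The interval mean stays inside the band while three individual loads sit well below it, which is why the check runs on individual readings rather than on the summary.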

Concealed special causes

Lab splits that disagree, out-of-family titration endpoints, a mislabeled makeup drum, or racking density that changed mid-job all leave fingerprints in individual results. Means absorb those fingerprints into background noise, which makes root cause work slower and more argumentative.

🛠 What to use instead of averages

Individuals charts (X-MR)

For many surface finishing parameters, an individuals chart with a moving range is the clearest daily tool: every measurement stays visible, and the moving range highlights sudden jumps between consecutive readings.

Example moving ranges from the thickness series above (|Δ| between consecutive panels):

| Step | Thickness (µm) | MR |
|---|---|---|
| 1 | 8.1 | n/a |
| 2 | 8.9 | 0.8 |
| 3 | 8.0 | 0.9 |
| 4 | 9.6 | 1.6 |
| 5 | 8.3 | 1.3 |
| 6 | 7.7 | 0.6 |

When MR climbs, treat it as a prompt to verify sampling, racking, chemistry, and measurement repeatability, not as “stats for stats’ sake.”
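
The moving ranges and the usual X-MR limits can be sketched for the full ten-panel thickness series as follows. The constants 2.66 and 3.267 are the standard individuals-chart factors for a moving range of two points; the function name is illustrative, and ten readings are far too few for limits you would actually run a line on.

```python
from statistics import mean

# Ten thickness readings (µm) from the thickness series above.
thickness_um = [8.1, 8.9, 8.0, 9.6, 8.3, 7.7, 8.8, 7.9, 9.2, 7.5]

# Standard chart constants for a moving range of two consecutive points.
E2 = 2.66    # 3 / d2, with d2 = 1.128
D4 = 3.267   # upper limit factor for the moving range chart


def xmr_limits(readings):
    """Compute moving ranges and X-MR limits for a series of individual readings."""
    moving_ranges = [abs(b - a) for a, b in zip(readings, readings[1:])]
    center = mean(readings)
    mr_bar = mean(moving_ranges)
    return {
        "moving_ranges": [round(mr, 2) for mr in moving_ranges],
        "center": center,
        "mr_bar": mr_bar,
        "x_ucl": center + E2 * mr_bar,  # upper limit for individuals
        "x_lcl": center - E2 * mr_bar,  # lower limit for individuals
        "mr_ucl": D4 * mr_bar,          # upper limit for moving ranges
    }


chart = xmr_limits(thickness_um)
print(chart["moving_ranges"])
print(f"X limits: {chart['x_lcl']:.2f} to {chart['x_ucl']:.2f} µm")
print(f"MR upper limit: {chart['mr_ucl']:.2f} µm")
```

In practice the limits would come from a longer baseline period when the process was known to be behaving, and new readings would then be judged against that baseline.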

Rational subgrouping (when you must subgroup)

If you use averages inside a charting method, the subgroup has to match reality:

Reasonable:

- Multiple readings from the same rack position and time window

- Duplicate lab pulls from the same well-mixed sample

- Multiple spots on a coupon that represent one controlled step

Risky:

- One number from first article, one from last article, one from a different customer, all averaged

- Mixing weekend idle time with Monday production in the same summary bucket
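
One way to keep subgroups honest is to make the grouping key explicit. The sketch below groups hypothetical readings by rack and hour before averaging; the rack IDs, hours, and values are invented for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical readings: (rack_id, hour, thickness_um)
readings = [
    ("R1", 8, 8.2), ("R1", 8, 8.4), ("R1", 8, 8.3),
    ("R2", 8, 9.1), ("R2", 8, 9.3), ("R2", 8, 9.2),
]


def subgroup_stats(rows):
    """Return {(rack, hour): (mean, range)} for each rational subgroup."""
    groups = defaultdict(list)
    for rack, hour, value in rows:
        groups[(rack, hour)].append(value)
    return {
        key: (round(mean(vals), 2), round(max(vals) - min(vals), 2))
        for key, vals in groups.items()
    }


stats = subgroup_stats(readings)
for key, (avg, rng) in stats.items():
    print(key, f"mean={avg} µm", f"range={rng} µm")
```

Within each rack the range is small, so the subgroup means are meaningful, and the gap between racks stays visible instead of being blended into one number.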

Retain raw data always

Policy rule: no summary without traceability. If a mean ships to a customer report or management scorecard, the underlying readings should be retrievable by time, tank, line, and part family. That is what lets you defend good work and fix real problems quickly.
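
A minimal sketch of what "no summary without traceability" could look like as a data structure: the mean carries its sample count, time window, and the raw readings it came from. The class names, field names, and sample data are hypothetical and do not describe Lab Wizard's actual schema.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass(frozen=True)
class Reading:
    taken_at: datetime
    tank: str
    line: str
    part_family: str
    value: float


@dataclass(frozen=True)
class TraceableMean:
    value: float
    sample_count: int
    window_start: datetime
    window_end: datetime
    readings: tuple  # raw Reading records kept alongside the summary


def summarize(readings):
    """Build a mean that still carries the readings it was computed from."""
    if not readings:
        raise ValueError("cannot summarize an empty set of readings")
    ordered = tuple(sorted(readings, key=lambda r: r.taken_at))
    return TraceableMean(
        value=mean([r.value for r in ordered]),
        sample_count=len(ordered),
        window_start=ordered[0].taken_at,
        window_end=ordered[-1].taken_at,
        readings=ordered,
    )


# Hypothetical pulls from one tank on one line.
pulls = [
    Reading(datetime(2024, 1, 8, 6, 0), "T3", "Line 1", "brackets", 8.1),
    Reading(datetime(2024, 1, 8, 7, 0), "T3", "Line 1", "brackets", 8.9),
    Reading(datetime(2024, 1, 8, 8, 0), "T3", "Line 1", "brackets", 8.0),
]
report = summarize(pulls)

# Drill back from the summary to the individual pulls behind it.
low_readings = [r for r in report.readings if r.value < 8.05]
print(report.value, report.sample_count, low_readings)
```

Because the summary object holds its own evidence, anyone questioning the mean can filter the underlying readings by time, tank, line, or part family without a separate data hunt.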

⚠️ Common mistakes with averages

❌ Mistake 1: Treating shift or daily means as proof of statistical control

A mean can sit still while variance doubles. Control is about behavior over time, not one aggregated bar on a dashboard.
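
A small illustration with two hypothetical shifts: the means match, but the sample variance of the second shift is twice that of the first. The readings are invented for the example.

```python
from statistics import mean, variance

# Hypothetical thickness readings (µm) from two shifts.
shift_a_um = [8.3, 8.5, 8.4, 8.3, 8.5, 8.4]
shift_b_um = [8.2, 8.6, 8.4, 8.4, 8.4, 8.4]

mean_a, mean_b = mean(shift_a_um), mean(shift_b_um)
var_a, var_b = variance(shift_a_um), variance(shift_b_um)  # sample variance

print(f"shift A: mean={mean_a:.2f}, variance={var_a:.4f}")
print(f"shift B: mean={mean_b:.2f}, variance={var_b:.4f}")
print(f"variance ratio B/A: {var_b / var_a:.2f}")
```

A dashboard that shows only the two means would report the shifts as identical.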

❌ Mistake 2: Averaging across different intentional conditions

Different alloys, thickness targets, or line speeds belong in different tracks, not one blended “plant average” that nobody can act on.

❌ Mistake 3: Trusting a smoothed PLC, MES, or LIMS feed without spot checking raw samples

Smoothing is often a display default. The number on the screen may be mathematically honest and still operationally misleading.

❌ Mistake 4: Building limits from the wrong data structure

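Limits must match how you sample. Limits for subgroup means are narrower than limits for individual readings, by roughly the square root of the subgroup size, so limits built from averages and then applied to individual readings (or the reverse) will either flag normal variation or miss real shifts.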
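
As a rough sketch of why the data structure matters: for independent readings with standard deviation sigma, the mean of n readings has standard deviation sigma divided by the square root of n, so three-sigma limits shrink as the subgroup grows. The sigma value below is a made-up assumption.

```python
from math import sqrt


def three_sigma_half_width(sigma, subgroup_size):
    """Half-width of three-sigma limits for the mean of `subgroup_size` readings."""
    return 3 * sigma / sqrt(subgroup_size)


sigma_um = 0.6  # hypothetical short-term standard deviation (µm)

individuals_half_width = three_sigma_half_width(sigma_um, 1)
means_of_4_half_width = three_sigma_half_width(sigma_um, 4)

print(f"individuals: ±{individuals_half_width:.2f} µm")
print(f"means of 4:  ±{means_of_4_half_width:.2f} µm")
```

Limits sized for means of four are half as wide as limits for individuals, so plotting single readings against them would flag ordinary variation as a signal.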

❌ Mistake 5: Assuming a stable average means a stable process

The pain in metal finishing usually lives in the tails and the timing of change, not in the center of the distribution.

📋 Implementation checklist

Use this to audit average-heavy monitoring in electroplating and surface finishing:

- Dashboards expose individual readings (or clear drill down), not only means

- Reports label the time window and sample count behind every average

- Raw logs are retained with timestamps and context (tank, operator, instrument)

- SPC uses individuals or rational subgroups, not convenience buckets

- Shift handoff calls out spread and last hour behavior, not only the shift mean

- After any quality event, the team pulls individuals first, means second

Why this matters in Lab Wizard

A summary is not a substitute for the record.

Lab Wizard is built around individual measurements, history, and alerts, so surface finishing teams can see drift and spikes while there is still time to act. When you stop letting averages tell the whole story, you get:

- Earlier detection: signals surface in individuals and patterns before the mean moves.

- Cleaner investigations: traceability from chart points back to actual pulls and tanks.

- Stronger audits: you can show what was known and when in customer and NADCAP-style reviews.

Use the checklist above to find where means are masking variation, then let Lab Wizard carry the detailed signal forward.

Related resources and references

Lab Wizard resources:

- Why Most Process Data Is Looked At Too Late – Close the gap between data existing and data being used

- When Monitoring Should Turn Into Action – Turn signals into accountable next steps

- Signal vs. Noise in Process Data – Separate real change from measurement and routine variation

- Understanding SPC Parameters: Cp, Cpk, Cpm, Pp, and Ppk Explained – Connect charts to capability language

External references:

- NIST Engineering Statistics Handbook – Control Chart Basics

- ASQ: Variation (Common Cause vs. Special Cause)

- ASQ: Seven Basic Quality Tools

Keep the raw measurements in the story, then let Lab Wizard turn that history into charts, alerts, and audit-ready context your team can trust.

Key takeaways

- Averages answer center; plating quality is driven by spread, timing, and tails.

- Use individuals and moving range (X-MR) for many electroplating parameters, or rational subgroups when the subgroup matches real process logic.

- Never drop raw data behind a mean if you expect to investigate defects or defend runs.

- Audit dashboards and reports for hidden smoothing, especially shift and daily rollups.

- If the mean looks fine but the floor does not, you are almost always looking at the wrong summary, not a mystery process.